Blog Analytics with AWStats

I’ve used quite a few different web hosting providers over the years, and most of them offered some kind of log file analysis tool. As part of my recent blogging renaissance, I did some exploration into how I could measure website traffic for this blog.

This entry will cover some history, as well as a brief overview of how I set things up on my server.

Log Analysis History

Before the days of tracking cookies, analytics platforms, and privacy-hostile website measurement, website maintainers used log file analysis tools to learn about their visitors.

Web servers are typically configured to log every request they serve. These logs are very basic - just plain text files that capture information about every single request, one line per request, split into pre-defined columns. Using the data in these columns, it’s possible to learn which pages a visitor viewed, where they came from, what kind of browser they used, and approximately where they are located geographically.

Here’s an example of an entry from the log for this site:

    xx.xx.178.144 - - [02/Nov/2022:05:15:37 +0000] "GET /2022/11/01/twitter-exports/ HTTP/1.1" 200 2407 "https://www.google.com/" "Mozilla/5.0 (Windows NT 6.3; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/80.0.3987.132 Safari/537.36"

(I’ve masked part of the IP address for the sake of the anonymous visitor’s privacy)

If you look at that log entry, you might be able to deduce that there’s an IP address, a date, a URL and HTTP request method. After that, it gets a little harder to decode if you’re unfamiliar with these types of files.[1]

Based on only this information, it’s possible to aggregate visitors by date, popular pages, common browsers, and geographic location of visitors.

Which is exactly what AWStats does.
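To make the column layout a bit more concrete, here’s a rough bash sketch that splits one log line into named fields. It assumes the common "combined"-style layout; the function name, the field labels, and the sample line (with a documentation-range IP address) are mine for illustration, and this is not how AWStats itself parses logs.

```shell
#!/usr/bin/env bash
# Sketch: split one "combined"-style access log line into named fields.
# The function name, field labels, and sample line are illustrative assumptions.

parse_log_line() {
  local line=$1
  # host ident user [timestamp] "method url protocol" status bytes "referrer" "user agent"
  local re='^([^ ]+) ([^ ]+) ([^ ]+) \[([^]]+)\] "([A-Z]+) ([^ ]+) ([^"]+)" ([0-9]{3}) ([0-9]+|-) "([^"]*)" "([^"]*)"$'
  if [[ $line =~ $re ]]; then
    printf 'host=%s\n'     "${BASH_REMATCH[1]}"
    printf 'time=%s\n'     "${BASH_REMATCH[4]}"
    printf 'method=%s\n'   "${BASH_REMATCH[5]}"
    printf 'url=%s\n'      "${BASH_REMATCH[6]}"
    printf 'status=%s\n'   "${BASH_REMATCH[8]}"
    printf 'bytes=%s\n'    "${BASH_REMATCH[9]}"
    printf 'referrer=%s\n' "${BASH_REMATCH[10]}"
    printf 'agent=%s\n'    "${BASH_REMATCH[11]}"
  else
    # Line does not look like the expected format
    return 1
  fi
}

# Made-up sample line using a documentation-range IP address
sample_line='203.0.113.7 - - [02/Nov/2022:05:15:37 +0000] "GET /2022/11/01/twitter-exports/ HTTP/1.1" 200 2407 "https://www.google.com/" "Mozilla/5.0 (X11; Linux x86_64)"'

parse_log_line "$sample_line"
```

Once the fields are separated like this, counting hits per page or per day is a matter of grouping on one column.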

AWStats Background

AWStats is one of those old-school open source projects that feels like it has been around forever, which is very nearly true, and it continues to fulfill its promise. The first version was released in 2000, and it has been a staple of most Linux-based managed hosting services since.

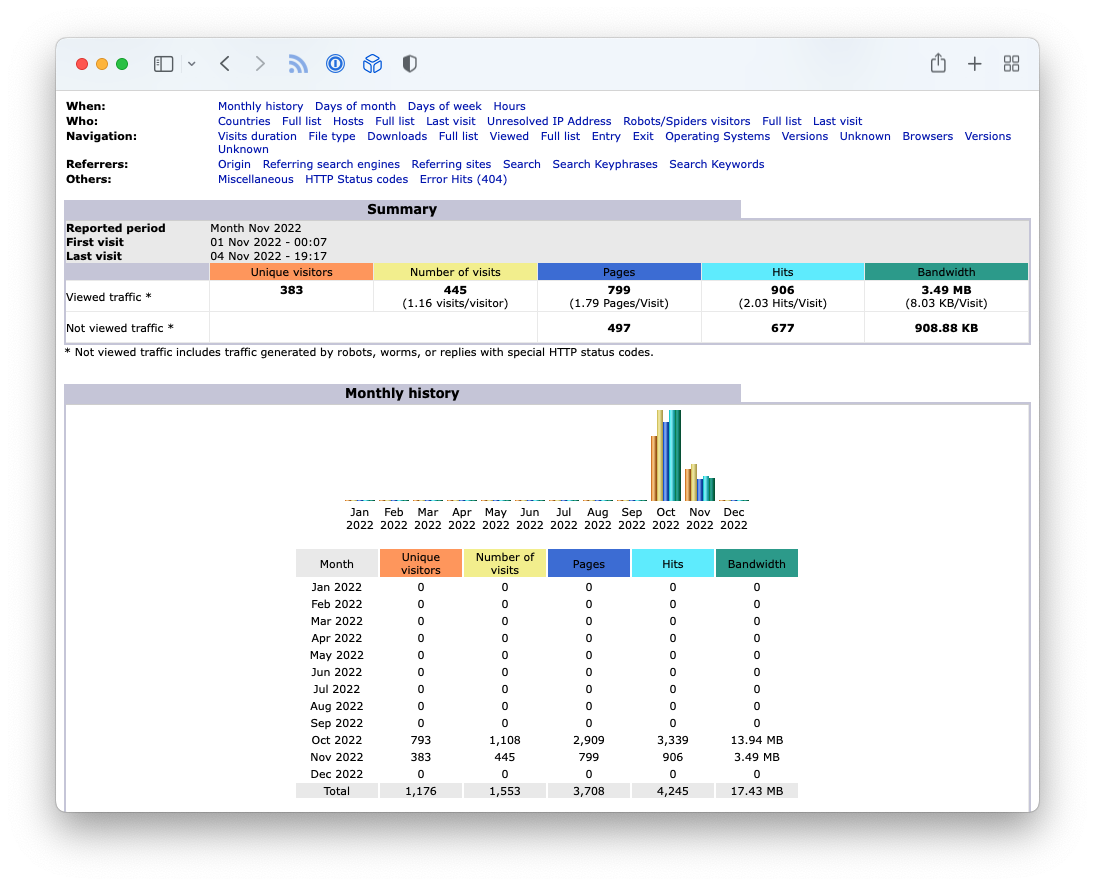

What I really love about AWStats is that it’s pretty easy to set up and the analysis it produces is really useful. It isn’t the most beautiful by today’s design standards, but it’s information-dense, with enough visual treatment to make it easy to use.

It also doesn’t require that I change anything on my website. There’s no JavaScript and no cookies, which aligns well with how I want my sites to work.

Surprisingly, I’ve never set it up by hand before; AWStats has always just been a link in a cPanel on a virtual host I was using. However, I host my websites on a DigitalOcean (referral link) Droplet running Ubuntu these days, so it’s a little more hands-on.

AWStats Setup

Depending on your operating system and package manager, these instructions may differ slightly for you. Here are a few details about my setup:

- Ubuntu 18.04 LTS

- Nginx 1.14

Installation

First, you’ll need to install awstats and a few supporting libraries:

    $ sudo apt install awstats libgeo-ip-perl libgeo-ipfree-perl

AWStats is written in Perl and uses a few additional modules to perform IP-related lookups. Some how-tos online mention additional steps for installing those Perl modules, but they’re available in Ubuntu through apt, so that one-liner should be all you need.

Once that completes, the tool is installed, but there’s more work to do. AWStats is just a Perl script, and does not have a service or daemon that runs continuously. It’s up to you to configure how you’d like to use AWStats.

Most people use AWStats in one of two ways:

- Dynamically, requested through a web server (with old-school cgi-bin style requests)

- With cron, generating static reports

I opted for the second, since I have prioritized hosting static content on my server.

Site Configuration

The first thing we need to do is configure a site. AWStats configuration on Ubuntu utilizes a traditional package/distro/local hierarchy. The configuration files are laid out like this:

    $ tree /etc/awstats

Here’s how this works: awstats.conf provides the default configuration from the upstream project and Ubuntu package maintainers. Future versions may overwrite that file, so local server-wide changes should be made in awstats.conf.local, which will not be updated by future package updates. Finally, site-specific configuration lives in the awstats.<site name>.conf file.

This model creates a logical hierarchy for configuration:

    awstats.conf - package defaults

The beauty of this design shines through when you look at the details more closely. Let’s start with the configuration of an individual site:

    # /etc/awstats/awstats.th.adde.us.conf

Here’s a brief description of the configuration directives:[2]

- Include: starts the configuration hierarchy mentioned above

- LogFile: specifies where the nginx log is located for this site

- SiteDomain: specifies the site’s domain name

That’s it for per-site configuration. Each site inherits a set of local server configuration values because it’s actually overriding other configuration values from the level above.
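As an illustration of those three directives together, here’s a sketch that writes a minimal site file into a scratch directory using a heredoc. The domain example.com, the log path, and the scratch directory are placeholders I made up for the example, not my real values; the Include line assumes the package defaults live at /etc/awstats/awstats.conf as described above.

```shell
#!/usr/bin/env bash
# Sketch: write a minimal per-site AWStats config into a scratch directory.
# example.com and the log path are placeholders, not real values from this server.

conf_dir=$(mktemp -d)
site="example.com"
site_conf="$conf_dir/awstats.$site.conf"

cat > "$site_conf" <<EOF
# Pull in the package defaults first; values set below override them
Include "/etc/awstats/awstats.conf"

# Access log for this one site
LogFile="/var/log/nginx/access-$site.log"

# Domain name for this site
SiteDomain="$site"
EOF

cat "$site_conf"
```

On a real server the file would live in /etc/awstats rather than a temporary directory.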

The next level up is the local configuration, which applies to all sites hosted on this local server. This is where most of the customization happens for me. Here’s what my file looks like:

    # /etc/awstats/awstats.conf.local

A few notes:

- I opted to specify LogFormat explicitly; I’m using the default nginx format and didn’t want to change nginx configuration (as many how-tos suggest). The traditional combined / Apache-style log may work fine, but being explicit feels safer.

- I set DirData to a directory that is closer to where my sites live, instead of the default /var/lib/awstats. This will make it easier to encapsulate my data for backups, etc.

- All of those Show... values are to ensure static pages get built for each of the data points. The serverfault link in the comment is where I learned that trick.
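To illustrate the first two notes, here’s a sketch that writes a couple of server-wide overrides to a scratch file. The LogFormat string is the commonly used AWStats mapping for nginx’s default combined layout, and the DirData path is a hypothetical placeholder; treat both as assumptions to check against your own setup. The Show... values are left out because they depend on which reports you want.

```shell
#!/usr/bin/env bash
# Sketch: write example server-wide overrides to a scratch file.
# The DirData path is hypothetical; the LogFormat string assumes nginx's
# default "combined" log layout.

local_conf="$(mktemp -d)/awstats.conf.local"

cat > "$local_conf" <<'EOF'
# One tag per column: host, dash, user, timestamp, request, status, bytes, referrer, user agent
LogFormat="%host %other %logname %time1 %methodurl %code %bytesd %refererquot %uaquot"

# Keep working data near the sites instead of /var/lib/awstats
DirData="/srv/www/awstats-data"
EOF

cat "$local_conf"
```

The nine tags line up one-to-one with the nine columns of the log entry shown earlier.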

Processing the Logs

Whew! 😅

With the server and site-level configuration files in place, now we need to run the tool and generate data.

You need to run awstats.pl once for each site configuration you define. This will process the log files according to the site configuration, and store working data in the location defined by DirData. You can think of that data as the scratchpad that AWStats uses for other tasks.

The developers of AWStats have provided a nice wrapper script to enumerate the site configs and run awstats.pl for each site:

    $ /usr/share/doc/awstats/examples/awstats_updateall.pl now -awstatsprog=/usr/lib/cgi-bin/awstats.pl

The -awstatsprog= parameter is the location of the awstats.pl script (remember when I mentioned cgi-bin… yep, that’s the old school peeking through). It doesn’t assume a certain location of the Perl script, so you need to provide it.
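The enumeration idea is simple enough to sketch in a few lines of bash. This is only an illustration of deriving site names from awstats.<site name>.conf file names, not how awstats_updateall.pl is actually implemented; the function name and the demo file names are made up.

```shell
#!/usr/bin/env bash
# Sketch: list the site names implied by awstats.<site>.conf files in a directory.
# Illustrative only; awstats_updateall.pl may work differently internally.

list_awstats_sites() {
  local conf_dir=$1 path name
  for path in "$conf_dir"/awstats.*.conf; do
    [ -e "$path" ] || continue   # the glob matched nothing
    name=${path##*/}             # strip the directory
    name=${name#awstats.}        # strip the "awstats." prefix
    name=${name%.conf}           # strip the ".conf" suffix
    printf '%s\n' "$name"
  done
}

# Demo against a scratch directory with made-up file names
demo_dir=$(mktemp -d)
touch "$demo_dir/awstats.conf" \
      "$demo_dir/awstats.conf.local" \
      "$demo_dir/awstats.example.com.conf" \
      "$demo_dir/awstats.blog.example.org.conf"

list_awstats_sites "$demo_dir"
```

Note that awstats.conf and awstats.conf.local fall outside the pattern, so only the per-site files are listed. Each name is what you would pass to awstats.pl as -config=<site name>.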

Since I also want to generate static html pages, I bundled this all up into a shell script:

    #!/bin/bash

And scheduled it with cron:

    # awstats (daily @ 1am)
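For anyone assembling something similar, here’s a minimal sketch of what such a script could look like. It is not my exact script: the site name, the output directory, the location of awstats_buildstaticpages.pl, and the crontab path in the comment are all assumptions, and it defaults to a dry run that only prints the command it would execute.

```shell
#!/usr/bin/env bash
# Sketch: update stats and build static pages for one site.
# Paths, the site name, and the output directory are assumptions; adjust for your system.
# DRY_RUN=1 (the default here) prints the command instead of running it.

AWSTATS_PROG="/usr/lib/cgi-bin/awstats.pl"
BUILD_STATIC="/usr/share/awstats/tools/awstats_buildstaticpages.pl"
DRY_RUN="${DRY_RUN:-1}"

run() {
  if [ "$DRY_RUN" = "1" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

build_site_report() {
  local site=$1 out_dir=$2
  # -update refreshes the stats from the log before the pages are generated
  run "$BUILD_STATIC" -config="$site" -update \
      -awstatsprog="$AWSTATS_PROG" -dir="$out_dir"
}

build_site_report "example.com" "/srv/www/stats/example.com"

# Hypothetical crontab entry to run a script like this daily at 1am:
# 0 1 * * * /usr/local/bin/awstats-build.sh
```

Setting DRY_RUN=0 runs the command for real, which only makes sense on a machine where AWStats is installed and the site is configured.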

Each time this job runs, it produces updated reports in the output directory I provided to awstats_buildstaticpages.pl.

    $ ls

Viewing one of these files produces a page like this:

If you made it this far, congratulations! 🎉

Log Rotation

Soooo… my example site configuration from earlier… has the following value for LogFile:

    LogFile="/var/log/nginx/access-th.adde.us.log"

“That… that was a lie. I apologize for that.”

Server logs can get pretty big after a while, so most distros include mechanisms to rotate log files periodically.

When logs are rotated, they’re typically renamed in the same folder, adding a number to the end (and shifting existing logs down). Here’s what my log directory looks like:

    $ tree /var/log/nginx/

So not only do my files get renamed with a dot-number suffix, but they also get gzipped after the second rotation. Well… that delay is there to allow log analysis tools to process the current log and the next most recent file. Depending on when the logs are rotated, there can be gaps in the data if this isn’t handled carefully. Like many other analysis tools, AWStats allows for re-processing of old files, and can properly avoid duplicating data. The general convention is to process the most recently rotated file along with the current file.

Fortunately, AWStats ships with a really handy tool to deal with that called logresolvemerge.pl. Here’s what the LogFile directive in my site configuration actually looks like:

    LogFile="/usr/share/awstats/tools/logresolvemerge.pl /var/log/nginx/access-th.adde.us.log* |"

You’ll notice I’m not being clever about only replaying the current and next-newest file… I’m just stuffing the whole set into the tool. If my sites got exceptionally busy, that might not work very well. For now, though, it only takes a couple of seconds to process everything, including the gzipped files.
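If the full set ever did get too slow, the narrower convention could be sketched like this: pick only the most recently rotated file and the current one. This assumes logrotate-style numeric suffixes where .1 is the newest rotated file and is still uncompressed; the function name and demo file names are made up, and I haven’t wired this into my own configuration.

```shell
#!/usr/bin/env bash
# Sketch: print only the most recently rotated log (<log>.1) and the current log,
# oldest first, skipping whichever does not exist.
# Assumes logrotate-style numeric suffixes where .1 is the newest rotated file.

recent_logs() {
  local current=$1 candidate
  for candidate in "$current.1" "$current"; do
    if [ -f "$candidate" ]; then
      printf '%s\n' "$candidate"
    fi
  done
}

# Demo against a scratch directory with made-up file names
log_dir=$(mktemp -d)
touch "$log_dir/access.log" "$log_dir/access.log.1" "$log_dir/access.log.2.gz"

recent_logs "$log_dir/access.log"
```

The two paths it prints could then be handed to logresolvemerge.pl in place of the wildcard.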

Resources

The following sites were helpful in refining how I set up AWStats on my server:

- https://awstats.sourceforge.io/docs/awstats_config.html

- https://itman.in/en/install-awstats-multiple-nginx-sites-debian-ubuntu/

- https://larsee.com/blog/2020/06/awstats-on-ubuntu-20-04-with-nginx/

[1] The columns in this log are as follows: remote address, delimiting dash, username (a dash in my logs), timestamp, request method and URL, HTTP status code, bytes sent, HTTP referrer, and the user agent.

[2] Check out the AWStats documentation for a detailed description of all of the configuration directives.